```python
import os

import cv2
import matplotlib.pyplot as plt
import numpy as np
import tensorflow as tf
from tensorflow.keras.preprocessing.image import ImageDataGenerator

%matplotlib inline
```

```javascript
%%javascript
// Notebook display setting: outputs longer than 50 lines are shown in a scrollable area
IPython.OutputArea.auto_scroll_threshold = 50;
```

```python
# Download and extract the filtered Cats vs. Dogs dataset
_URL = 'https://storage.googleapis.com/mledu-datasets/cats_and_dogs_filtered.zip'
path_to_zip = tf.keras.utils.get_file('cats_and_dogs.zip', origin=_URL, extract=True)
PATH = os.path.join(os.path.dirname(path_to_zip), 'cats_and_dogs_filtered')
```

```
Downloading data from https://storage.googleapis.com/mledu-datasets/cats_and_dogs_filtered.zip
68608000/68606236 [==============================] - 1s 0us/step
```

```python
train_path = os.path.join(PATH, 'train')
```

validation_path = os.path.join(PATH, 'validation')original_datagen = ImageDataGenerator(rescale=1./255)training_datagen = ImageDataGenerator(

rescale=1. / 255,

rotation_range=30,

width_shift_range=0.1,

height_shift_range=0.1,

shear_range=0.1,

zoom_range=0.1,

horizontal_flip=True,

fill_mode='nearest')original_generator = original_datagen.flow_from_directory(train_path,

batch_size=128,

target_size=(150, 150),

class_mode='binary'

)Found 2000 images belonging to 2 classes.training_generator = training_datagen.flow_from_directory(train_path,

batch_size=128,

shuffle=True,

target_size=(150, 150),

class_mode='binary'

)Found 2000 images belonging to 2 classes.validation_generator = training_datagen.flow_from_directory(validation_path,

batch_size=128,

shuffle=True,

target_size=(150, 150),

class_mode='binary'

)Found 1000 images belonging to 2 classes.class_map = {

0: 'Cats',

1: 'Dogs',

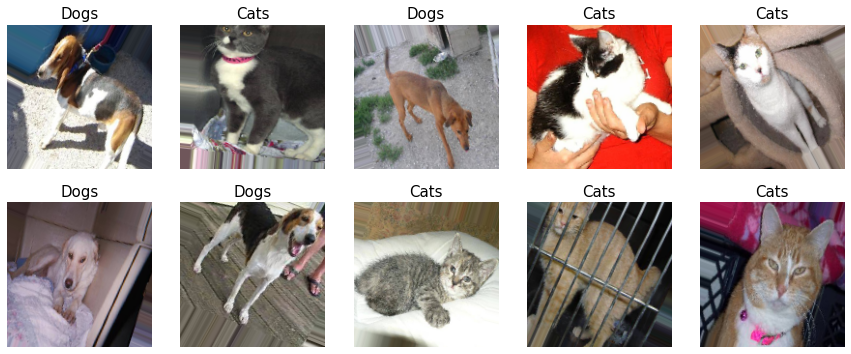

}print('오리지널 사진 파일')

for x, y in original_generator:

print(x.shape, y.shape)

print(y[0])

fig, axes = plt.subplots(2, 5)

fig.set_size_inches(15, 6)

for i in range(10):

axes[i//5, i%5].imshow(x[i])

axes[i//5, i%5].set_title(class_map[int(y[i])], fontsize=15)

axes[i//5, i%5].axis('off')

plt.show()

break

print('Augmentation 적용한 사진 파일')

for x, y in training_generator:

print(x.shape, y.shape)

print(y[0])

fig, axes = plt.subplots(2, 5)

fig.set_size_inches(15, 6)

for i in range(10):

axes[i//5, i%5].imshow(x[i])

axes[i//5, i%5].set_title(class_map[int(y[i])], fontsize=15)

axes[i//5, i%5].axis('off')

plt.show()

break오리지널 사진 파일

(128, 150, 150, 3) (128,)

0.0

Augmentation 적용한 사진 파일

(128, 150, 150, 3) (128,)

1.0

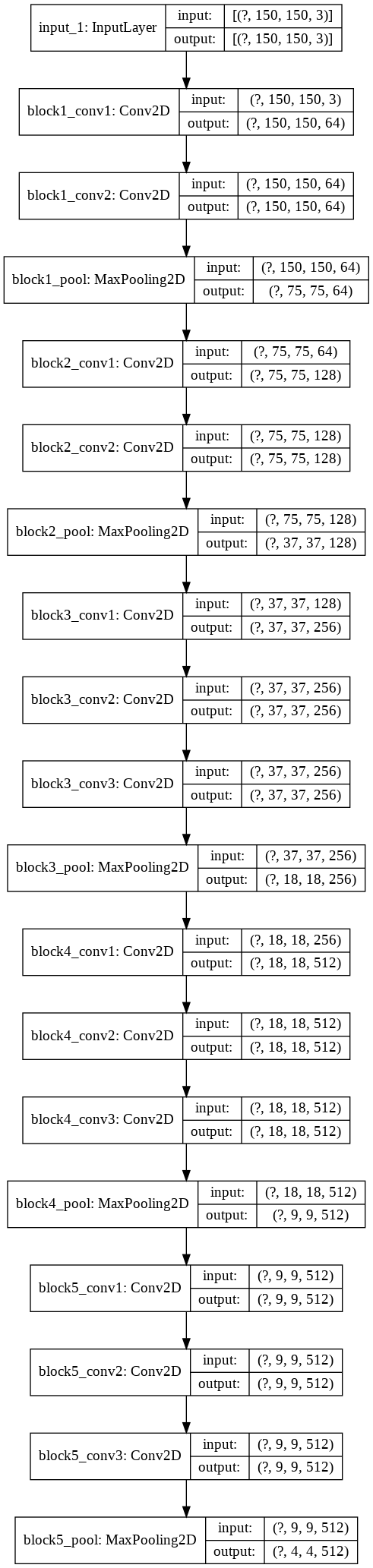
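A quick check on the batch arithmetic: `len()` of a Keras directory iterator is the number of batches needed to cover every image once, i.e. the ceiling of images / batch size, with a smaller final batch. The sketch below assumes the 2,000 training and 1,000 validation images reported by `flow_from_directory` and `batch_size=128`; `batches_per_epoch` is a hypothetical helper, not a Keras function.

```python
import math


def batches_per_epoch(num_images: int, batch_size: int) -> int:
    """Batches per pass over the data; the last batch may be partial (hypothetical helper)."""
    return math.ceil(num_images / batch_size)


train_steps = batches_per_epoch(2000, 128)       # 15 full batches + 1 batch of 80 images
validation_steps = batches_per_epoch(1000, 128)  # 7 full batches + 1 batch of 104 images
print(train_steps, validation_steps)
```

The 16 training batches are what `steps_per_epoch=len(training_generator)` passes to `fit`, and they match the `16/16` counter in the training log.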

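```python
# VGG16 convolutional base pre-trained on ImageNet, without the fully connected classifier
vgg_model = tf.keras.applications.VGG16(input_shape=(150, 150, 3), include_top=False)
```

```
Downloading data from https://storage.googleapis.com/tensorflow/keras-applications/vgg16/vgg16_weights_tf_dim_ordering_tf_kernels_notop.h5
58892288/58889256 [==============================] - 1s 0us/step
```

```python
tf.keras.utils.plot_model(vgg_model, show_shapes=True)
```

```python
# Freeze the pre-trained weights; only the new head is trained
vgg_model.trainable = False

i = vgg_model.input
# Use the last convolutional layer (block5_conv3, the second-to-last layer) instead of
# block5_pool, so the 9x9x512 feature maps keep their spatial layout for the CAM
out = vgg_model.get_layer('block5_conv3').output
# CAM head: global average pooling followed by a single softmax Dense layer
out = tf.keras.layers.GlobalAveragePooling2D()(out)
out = tf.keras.layers.Dense(2, activation='softmax')(out)
```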
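Why `block5_conv3` is 9×9: in Keras' VGG16 the 3×3 convolutions use `'same'` padding and keep the spatial size, while each block ends in a 2×2, stride-2 max pool with `'valid'` padding, which floors odd sizes. `block5_conv3` comes after the fourth pool. A minimal sketch reproducing the sizes in the summary (`vgg16_feature_sizes` is a hypothetical helper):

```python
def vgg16_feature_sizes(input_size: int, num_pools: int = 4) -> list:
    """Spatial size after each 2x2/stride-2 'valid' max pool (hypothetical helper).

    The 'same'-padded 3x3 convolutions do not change the size, so only the pools matter.
    block5_conv3 follows the fourth pool, hence num_pools=4.
    """
    sizes = [input_size]
    for _ in range(num_pools):
        # floor((n - 2) / 2) + 1 equals n // 2 for n >= 2
        sizes.append(sizes[-1] // 2)
    return sizes


print(vgg16_feature_sizes(150))
```

The coarse 9×9 grid is the reason the activation map has to be resized to 150×150 before it can be laid over the image.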

```python
train_cam_model = tf.keras.Model(inputs=[i], outputs=[out])
train_cam_model.summary()
```

```
Model: "model"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
input_1 (InputLayer)         [(None, 150, 150, 3)]     0
block1_conv1 (Conv2D)        (None, 150, 150, 64)      1792
block1_conv2 (Conv2D)        (None, 150, 150, 64)      36928
block1_pool (MaxPooling2D)   (None, 75, 75, 64)        0
block2_conv1 (Conv2D)        (None, 75, 75, 128)       73856
block2_conv2 (Conv2D)        (None, 75, 75, 128)       147584
block2_pool (MaxPooling2D)   (None, 37, 37, 128)       0
block3_conv1 (Conv2D)        (None, 37, 37, 256)       295168
block3_conv2 (Conv2D)        (None, 37, 37, 256)       590080
block3_conv3 (Conv2D)        (None, 37, 37, 256)       590080
block3_pool (MaxPooling2D)   (None, 18, 18, 256)       0
block4_conv1 (Conv2D)        (None, 18, 18, 512)       1180160
block4_conv2 (Conv2D)        (None, 18, 18, 512)       2359808
block4_conv3 (Conv2D)        (None, 18, 18, 512)       2359808
block4_pool (MaxPooling2D)   (None, 9, 9, 512)         0
block5_conv1 (Conv2D)        (None, 9, 9, 512)         2359808
block5_conv2 (Conv2D)        (None, 9, 9, 512)         2359808
block5_conv3 (Conv2D)        (None, 9, 9, 512)         2359808
global_average_pooling2d (Gl (None, 512)               0
dense (Dense)                (None, 2)                 1026
=================================================================
Total params: 14,715,714
Trainable params: 1,026
Non-trainable params: 14,714,688
_________________________________________________________________
```
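The parameter counts in the summary can be reproduced by hand: a convolution has kernel × kernel × input channels × output channels weights plus one bias per output channel, and a Dense layer has inputs × outputs weights plus one bias per output. A sketch under those standard formulas; the channel list is read off the summary, and the helper names are hypothetical.

```python
def conv2d_params(kernel: int, in_channels: int, out_channels: int) -> int:
    """Weights (kernel x kernel x in x out) plus one bias per output channel."""
    return kernel * kernel * in_channels * out_channels + out_channels


def dense_params(in_features: int, out_features: int) -> int:
    """Weight matrix plus one bias per output unit."""
    return in_features * out_features + out_features


# (in, out) channels of the 13 VGG16 convolutions, as listed in the summary
vgg16_convs = [(3, 64), (64, 64),
               (64, 128), (128, 128),
               (128, 256), (256, 256), (256, 256),
               (256, 512), (512, 512), (512, 512),
               (512, 512), (512, 512), (512, 512)]

frozen = sum(conv2d_params(3, c_in, c_out) for c_in, c_out in vgg16_convs)
trainable = dense_params(512, 2)  # GAP leaves 512 features; Dense(2) on top
print(f"{frozen:,} {trainable:,} {frozen + trainable:,}")
```

This prints `14,714,688 1,026 14,715,714`, the non-trainable, trainable, and total counts above: only the 1,026 weights of the new head are learned.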

_________________________________________________________________train_cam_model.compile(optimizer='adam', loss='sparse_categorical_crossentropy', metrics=['acc'])epochs=20history = train_cam_model.fit(training_generator,

validation_data=(validation_generator),

steps_per_epoch=len(training_generator),

validation_steps=len(validation_generator),

epochs=epochs,

)Epoch 1/20

16/16 [==============================] - 21s 1s/step - loss: 0.6727 - acc: 0.5845 - val_loss: 0.6606 - val_acc: 0.6030

Epoch 2/20

16/16 [==============================] - 21s 1s/step - loss: 0.6408 - acc: 0.7025 - val_loss: 0.6260 - val_acc: 0.7300

Epoch 3/20

16/16 [==============================] - 21s 1s/step - loss: 0.6156 - acc: 0.7395 - val_loss: 0.5999 - val_acc: 0.7600

Epoch 4/20

16/16 [==============================] - 21s 1s/step - loss: 0.5901 - acc: 0.7695 - val_loss: 0.5802 - val_acc: 0.7700

Epoch 5/20

16/16 [==============================] - 21s 1s/step - loss: 0.5711 - acc: 0.7750 - val_loss: 0.5620 - val_acc: 0.7760

Epoch 6/20

16/16 [==============================] - 21s 1s/step - loss: 0.5524 - acc: 0.7910 - val_loss: 0.5472 - val_acc: 0.7710

Epoch 7/20

16/16 [==============================] - 21s 1s/step - loss: 0.5376 - acc: 0.7880 - val_loss: 0.5356 - val_acc: 0.7890

Epoch 8/20

16/16 [==============================] - 20s 1s/step - loss: 0.5290 - acc: 0.7830 - val_loss: 0.5210 - val_acc: 0.7840

Epoch 9/20

16/16 [==============================] - 21s 1s/step - loss: 0.5118 - acc: 0.8075 - val_loss: 0.5110 - val_acc: 0.8000

Epoch 10/20

16/16 [==============================] - 21s 1s/step - loss: 0.5054 - acc: 0.8050 - val_loss: 0.5038 - val_acc: 0.8060

Epoch 11/20

16/16 [==============================] - 21s 1s/step - loss: 0.4954 - acc: 0.8160 - val_loss: 0.4976 - val_acc: 0.7960

Epoch 12/20

16/16 [==============================] - 21s 1s/step - loss: 0.4849 - acc: 0.8100 - val_loss: 0.4867 - val_acc: 0.7960

Epoch 13/20

16/16 [==============================] - 21s 1s/step - loss: 0.4777 - acc: 0.8090 - val_loss: 0.4768 - val_acc: 0.8140

Epoch 14/20

16/16 [==============================] - 21s 1s/step - loss: 0.4707 - acc: 0.8170 - val_loss: 0.4644 - val_acc: 0.8170

Epoch 15/20

16/16 [==============================] - 21s 1s/step - loss: 0.4674 - acc: 0.8140 - val_loss: 0.4639 - val_acc: 0.8210

Epoch 16/20

16/16 [==============================] - 21s 1s/step - loss: 0.4619 - acc: 0.8250 - val_loss: 0.4568 - val_acc: 0.8010

Epoch 17/20

16/16 [==============================] - 21s 1s/step - loss: 0.4515 - acc: 0.8225 - val_loss: 0.4496 - val_acc: 0.8150

Epoch 18/20

16/16 [==============================] - 21s 1s/step - loss: 0.4428 - acc: 0.8295 - val_loss: 0.4508 - val_acc: 0.8140

Epoch 19/20

16/16 [==============================] - 21s 1s/step - loss: 0.4419 - acc: 0.8300 - val_loss: 0.4461 - val_acc: 0.8100

Epoch 20/20

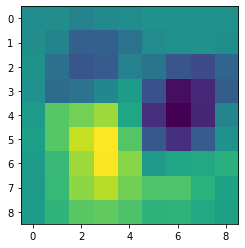

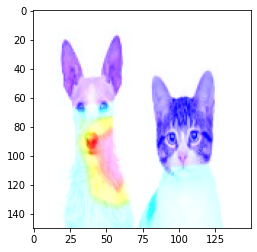

16/16 [==============================] - 21s 1s/step - loss: 0.4326 - acc: 0.8280 - val_loss: 0.4396 - val_acc: 0.8170cam_model = tf.keras.models.Model([train_cam_model.input], [train_cam_model.get_layer('block5_conv3').output,train_cam_model.output])class_weights = train_cam_model.layers[-1].get_weights()[0]class_num=1class_weights[:,class_num].shape(512,)img = cv2.imread('aa.jpg')img = cv2.resize(img, (150, 150))img=cv2.cvtColor(img,cv2.COLOR_BGR2RGB)plt.imshow(img)
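Why the Dense kernel is all that is needed: with global average pooling in front of the Dense layer, the pre-softmax score of class $c$ is the bias plus a weighted average of the feature maps $f_k$ of `block5_conv3`, and the sums can be swapped so that the score becomes the spatial average of a per-class map $M_c$:

$$z_c = b_c + \sum_k w_k^c \frac{1}{HW}\sum_{x,y} f_k(x,y) = b_c + \frac{1}{HW}\sum_{x,y} M_c(x,y), \qquad M_c(x,y) = \sum_k w_k^c f_k(x,y)$$

Here $w_k^c$ is `class_weights[k, c]`. The numeric check below uses random stand-in arrays (assumed shapes 9×9×512 and 512×2, not the trained values) and confirms that both orders of computation give the same logits.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical stand-ins for the block5_conv3 output and the Dense kernel/bias
features = rng.random((9, 9, 512))
weights = rng.standard_normal((512, 2))
bias = rng.standard_normal(2)

# Path 1, what the model computes: global average pooling, then the Dense layer (pre-softmax)
logits = features.mean(axis=(0, 1)) @ weights + bias

# Path 2: build each class's activation map first, then average it over the 9x9 grid
cams = np.einsum('hwk,kc->chw', features, weights)  # shape (2, 9, 9)
logits_from_cam = cams.mean(axis=(1, 2)) + bias

print(np.allclose(logits, logits_from_cam))
```

Because the class score is just the average of $M_c$, the locations where $M_c$ is large are the ones that pushed the score up.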

conv_outputs, predictions = cam_model.predict(np.reshape(img,(1,150,150,3)))s=[]conv_outputs[0].shape(9, 9, 512)class_weights[100,class_num]0.0656074conv_outputs[0][:,:,100]array([[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 5.1452355, 0. , 0. , 0. ,

0. , 0. , 0. ]], dtype=float32)class_weights[100,class_num] * conv_outputs[0][:,:,100]array([[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. ],

[0. , 0. , 0.3375655, 0. , 0. , 0. ,

0. , 0. , 0. ]], dtype=float32)i=0

for w in class_weights[:,class_num]:

s.append(w * conv_outputs[0][:,:,i])

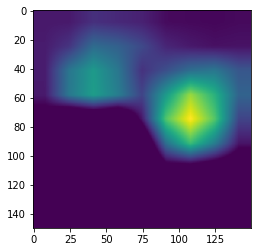

i=i+1s=np.array(s)s=np.sum(s,axis=0)s.shape(9, 9)plt.imshow(s)
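The loop above builds the map one channel at a time. The same weighted sum over the channel axis is a single matrix product, since `(H, W, K) @ (K,)` contracts the last axis and leaves an `(H, W)` map. A sketch with random stand-in data; `class_activation_map` is a hypothetical helper name.

```python
import numpy as np


def class_activation_map(feature_maps: np.ndarray, class_weights: np.ndarray,
                         class_num: int) -> np.ndarray:
    """(H, W, K) feature maps x (K,) weights of one class -> (H, W) map (hypothetical helper)."""
    return feature_maps @ class_weights[:, class_num]


rng = np.random.default_rng(42)
feature_maps = rng.random((9, 9, 512))   # stand-in for conv_outputs[0]
weights = rng.standard_normal((512, 2))  # stand-in for class_weights

# Loop version, accumulating one weighted channel at a time
loop_cam = np.zeros((9, 9))
for k, w in enumerate(weights[:, 1]):
    loop_cam += w * feature_maps[:, :, k]

vec_cam = class_activation_map(feature_maps, weights, 1)
print(vec_cam.shape, np.allclose(loop_cam, vec_cam))
```

The vectorized form avoids building the intermediate `(512, 9, 9)` array.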

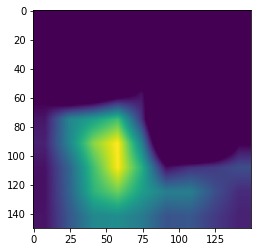

import cv2

cam = cv2.resize(s, (150, 150))

cam = np.maximum(cam, 0)

heatmap = (cam - cam.min()) / (cam.max() - cam.min())heatmaparray([[0. , 0. , 0. , ..., 0. , 0. ,

0. ],

[0. , 0. , 0. , ..., 0. , 0. ,

0. ],

[0. , 0. , 0. , ..., 0. , 0. ,

0. ],

...,

[0.05159856, 0.05159856, 0.05159856, ..., 0.09521208, 0.09521208,

0.09521208],

[0.05159856, 0.05159856, 0.05159856, ..., 0.09521208, 0.09521208,

0.09521208],

[0.05159856, 0.05159856, 0.05159856, ..., 0.09521208, 0.09521208,

0.09521208]], dtype=float32)plt.imshow(heatmap)

cam = cv2.applyColorMap(np.uint8(255*heatmap),cv2.COLOR_BGR2RGB)plt.imshow(cv2.add(cam,img))
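One edge case in the normalization step: if the map is constant after the ReLU (for example, every location is negative for the class being explained), `cam.max() - cam.min()` is 0 and the division yields NaN. A minimal sketch that adds a small epsilon to the denominator; `normalize_cam` and `eps=1e-8` are assumptions, not part of the original notebook.

```python
import numpy as np


def normalize_cam(cam: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """ReLU, then min-max scale to [0, 1]; eps avoids 0/0 on a constant map (hypothetical helper)."""
    cam = np.maximum(cam, 0)
    return (cam - cam.min()) / (cam.max() - cam.min() + eps)


scaled = normalize_cam(np.array([[-1.0, 0.5], [1.0, 2.0]]))
flat = normalize_cam(np.full((2, 2), -3.0))  # all-negative map -> all zeros instead of NaN
print(scaled)
print(flat)
```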

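```python
# Same steps for class 0 (Cats)
class_num = 0
s = []
for k, w in enumerate(class_weights[:, class_num]):
    s.append(w * conv_outputs[0][:, :, k])
s = np.sum(np.array(s), axis=0)

cam = cv2.resize(s, (150, 150))
cam = np.maximum(cam, 0)
heatmap = (cam - cam.min()) / (cam.max() - cam.min())
# COLORMAP_* constant for applyColorMap; its BGR result is converted to RGB for matplotlib
cam = cv2.applyColorMap(np.uint8(255 * heatmap), cv2.COLORMAP_RAINBOW)
cam = cv2.cvtColor(cam, cv2.COLOR_BGR2RGB)
```

```python
plt.imshow(heatmap)
```

```python
plt.imshow(cv2.add(cam, img))
```

```python
plt.imshow(img)
```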
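Rather than repeating the whole block with a hard-coded `class_num`, all class maps can be computed in one contraction and the class to explain taken from the model's prediction. A sketch with random stand-ins for `conv_outputs[0]`, `class_weights` and `predictions[0]`; `cams_for_all_classes` is a hypothetical helper, and choosing the arg-max class is an assumption about what one wants to visualize.

```python
import numpy as np


def cams_for_all_classes(feature_maps: np.ndarray, class_weights: np.ndarray) -> np.ndarray:
    """(H, W, K) features and (K, C) weights -> (C, H, W), one map per class (hypothetical helper)."""
    return np.einsum('hwk,kc->chw', feature_maps, class_weights)


rng = np.random.default_rng(7)
feature_maps = rng.random((9, 9, 512))   # stand-in for conv_outputs[0]
weights = rng.standard_normal((512, 2))  # stand-in for class_weights
probs = np.array([0.3, 0.7])             # stand-in for predictions[0]

cams = cams_for_all_classes(feature_maps, weights)
predicted = int(np.argmax(probs))        # explain the predicted class instead of a fixed class_num
print(cams.shape, predicted)
```

`cams[predicted]` can then go through the same resize, ReLU, normalization, and colormap steps as above.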