import torch

import numpy as np

import matplotlib.pyplot as plt

Create a sample dataset

x = torch.tensor(np.arange(10))

y = 2*x + 1

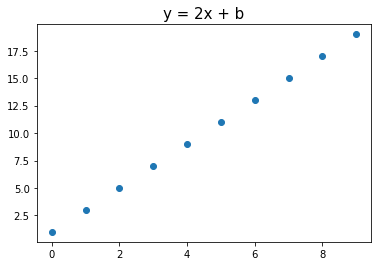

y

tensor([ 1, 3, 5, 7, 9, 11, 13, 15, 17, 19])

plt.title('y = 2x + b', fontsize=15)

plt.scatter(x, y)

plt.show()

# Create random w, b

w = torch.randn(1, requires_grad=True)

b = torch.randn(1, requires_grad=True)

w, b

(tensor([0.8925], requires_grad=True), tensor([1.2460], requires_grad=True))

# Define the hypothesis function

y_hat = w*x + b

# Define the Mean Squared Error (MSE) loss

loss = ((y_hat - y)**2).mean()

# Backpropagation (compute the gradients)

loss.backward()

# Print the results

print(f'w gradient: {w.grad.item():.2f}, b gradient: {b.grad.item():.2f}')

w gradient: -60.91, b gradient: -9.48

Compare with the manually computed gradients of w and b
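For reference, the manual expressions used in this check can be obtained by differentiating the MSE loss with respect to w and b. The symbols L (the loss) and n (the number of samples, 10 here) are notation introduced for this sketch and do not appear in the original code.

```latex
\hat{y}_i = w x_i + b, \qquad
L = \frac{1}{n} \sum_{i=1}^{n} (\hat{y}_i - y_i)^2

\frac{\partial L}{\partial w} = \frac{2}{n} \sum_{i=1}^{n} (\hat{y}_i - y_i)\, x_i, \qquad
\frac{\partial L}{\partial b} = \frac{2}{n} \sum_{i=1}^{n} (\hat{y}_i - y_i)
```

The two sums are the quantities that `(2*(y_hat - y)*x).mean()` and `(2*(y_hat - y)).mean()` evaluate, which is why they should match `w.grad` and `b.grad`.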

w_grad = (2*(y_hat - y)*x).mean().item()

b_grad = (2*(y_hat - y)).mean().item()

print(f'w gradient: {w_grad:.2f}, b gradient: {b_grad:.2f}')

w gradient: -60.91, b gradient: -9.48

Disabling gradient computation

y_hat = w*x + b

print(y_hat.requires_grad)

with torch.no_grad():

    y_hat = w*x + b

print(y_hat.requires_grad)

True

False
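One common place where `torch.no_grad()` shows up is the parameter update of a hand-written gradient descent loop, where the update itself should not be tracked by autograd. The following is only a minimal sketch under assumptions that are not in the original post: the learning rate `lr`, the step count, the fixed seed, and the helper `mse` are all hypothetical choices made for illustration.

```python
import torch

# Same toy data as above, y = 2x + 1 (created as float here for simplicity)
x = torch.arange(10, dtype=torch.float32)
y = 2 * x + 1

torch.manual_seed(0)  # assumption: fixed seed so the run is repeatable
w = torch.randn(1, requires_grad=True)
b = torch.randn(1, requires_grad=True)

lr = 0.01  # hypothetical learning rate, not taken from the original post


def mse(w, b):
    """Mean squared error of the hypothesis w*x + b against y."""
    return ((w * x + b - y) ** 2).mean()


loss_before = mse(w, b).item()

for _ in range(100):  # hypothetical number of steps
    loss = mse(w, b)
    loss.backward()          # fills w.grad and b.grad
    with torch.no_grad():    # the update itself is not recorded by autograd
        w -= lr * w.grad
        b -= lr * b.grad
    w.grad.zero_()           # gradients accumulate, so reset them each step
    b.grad.zero_()

loss_after = mse(w, b).item()
print(f'loss before: {loss_before:.4f}, loss after: {loss_after:.4f}')
```

Inside the `with` block the in-place updates leave `w` and `b` as leaf tensors with `requires_grad=True`, so the next iteration can backpropagate through a fresh graph.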